I ran some performance tests and saw that, in my case, Fortnite requests a timer resolution of 10,000 (in 100 ns units, i.e. 1 ms), Synapse 3 requests 20,000 (2 ms), and ISLC requests 5,000 (0.5 ms). So which one does Windows actually apply? Even though ISLC reports the current resolution as 0.5 ms, I don't think that necessarily means Windows is really using that value.
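To make the units concrete, here is a minimal sketch of the arithmetic. It assumes the request values are in 100 ns units, which is how Windows usually reports timer resolution, and it assumes a "smallest requested interval wins" rule, as `timeBeginPeriod` is documented for Windows versions before Windows 10 version 2004. Newer versions may handle requests per process, so treat the last step as an assumption, not a confirmed answer.

```python
# Assumption: the request values are in 100 ns units, so 10,000 units = 1 ms.
UNITS_PER_MS = 10_000

# Requests observed in my tests (application name -> requested period in 100 ns units).
requests = {"Fortnite": 10_000, "Synapse 3": 20_000, "ISLC": 5_000}


def to_ms(units: int) -> float:
    """Convert a period in 100 ns units to milliseconds."""
    return units / UNITS_PER_MS


requests_ms = {name: to_ms(units) for name, units in requests.items()}

# Assumption: system-wide, the smallest requested interval (finest resolution) wins.
effective_name = min(requests_ms, key=requests_ms.get)
effective_ms = requests_ms[effective_name]

for name, ms in requests_ms.items():
    print(f"{name}: {ms} ms")
print(f"Smallest request: {effective_name} at {effective_ms} ms")
```

Under those assumptions the ISLC request would be the one that counts, but that is exactly the part I would like someone to confirm.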

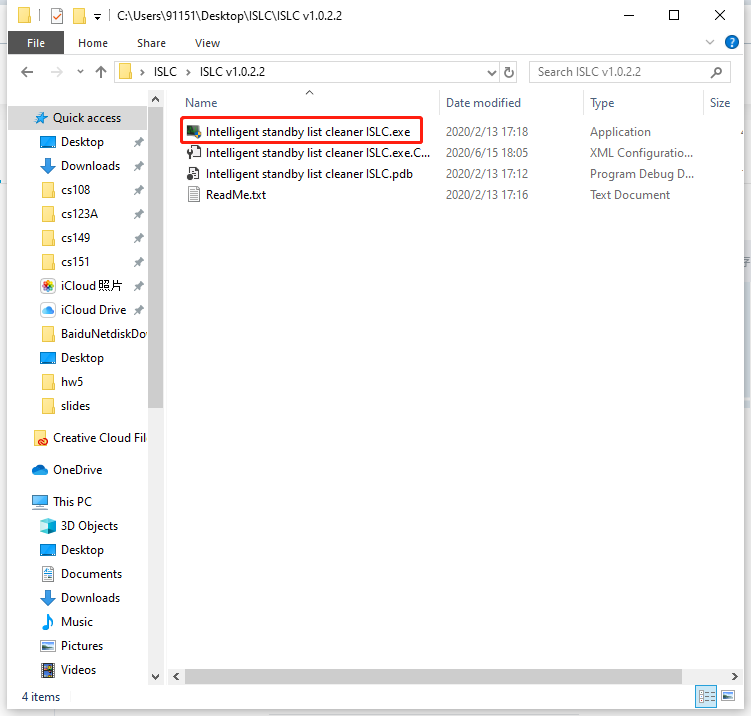

I have a doubt about one feature of this program, the timer resolution. As you can see in the program, the maximum timer resolution is set to 0.50 ms and the minimum defaults to 15.625 ms. Am I the only one who has noticed that the minimum is much greater than the maximum? To put it another way, these values look reversed: I would expect a minimum of 0.50 ms and a maximum of 15.625 ms, because otherwise it doesn't make sense for the minimum latency in milliseconds to be much higher than the maximum.
